Designing for Action in AI Systems

We have moved beyond the phase where purely generative text suffices. The current challenge in AI development is "action capability" systems that not only describe a plan but also execute it, monitor the results, and iteratively refine it.

Building an agent-based system requires a fundamental architectural shift. It's no longer a simple request-response cycle but a potentially infinite, stateful loop of inferences and actions. This leads to complexities regarding reliability, loop termination, and state management that are not addressed by traditional software patterns.

Choosing the right design pattern is crucial to avoid unwieldy and difficult-to-maintain source code later on. This article describes common architectural patterns for agent-based AI and provides a framework for selecting the appropriate pattern for your use case.

The Core Loop

Before we delve into specific patterns, it's essential to understand the fundamental loop that defines an agent. Unlike a standard Retrieval-Augmented Generation (RAG) pipeline, an agent maintains an internal state and executes a cycle:

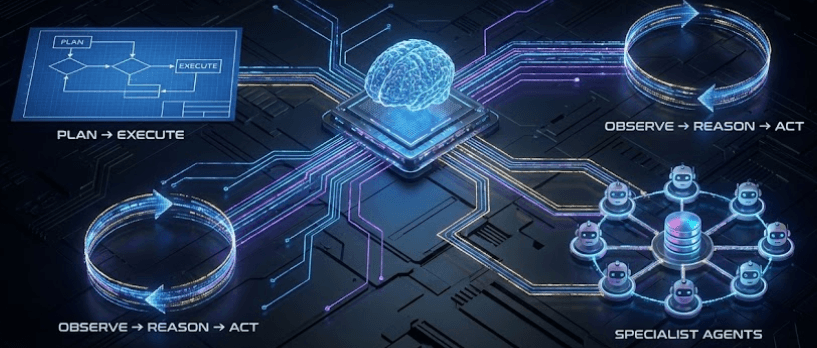

Observe: The agent intakes the user query and the current environmental state.

Reason: The LLM determines the next necessary step, deciding which tools (if any) are required.

Act: The agent executes a tool (e.g., runs an SQL query, calls an API, searches the web).

Reflect: The agent receives the output of the action and updates its internal state.

Repeat: The loop continues until the agent determines the original goal is met.

The architectural patterns below differ primarily in how they manage the "Reason" and "Reflect" stages of this loop.

Pattern 1: The Iterative Tool-User (ReAct)

This is the most common foundational pattern. It combines reasoning and acting in interleaved steps. The model is prompted to "think" about what to do next, execute a single action, observe the output, and then "think" again.

How it works

The system prompts the LLM with the current objective and a list of available tools. The LLM outputs a "thought" and a "tool call." The system executes the tool call and feeds the output back into the next prompt as an "observation."

When to use it

This pattern is highly effective for multi-step tasks where the necessary information to complete step N+1 is only available after completing step N. It is flexible and relatively easy to implement using frameworks like LangChain or Haystack.

Best for:

Tasks requiring sequential discovery (e.g., debugging a specific error message).

General-purpose assistants with a moderate number of tools (5–15).

Drawbacks:

It can get stuck in loops if the reasoning step fails repeatedly.

Latency is high because it requires a full LLM inference call for every single step.

Pattern 2: The Plan-and-Execute Architect

For complex objectives, iterative reasoning can lose the "big picture." The Plan-and-Execute pattern decouples reasoning from action.

How it works

This architecture uses two distinct phases (and often two distinct prompts or models):

The Planner: An LLM analyzes the user request and generates a complete, high-level directed acyclic graph (DAG) of steps required to solve the problem. No actions are taken yet.

The Executor: A separate component (often a simpler loop) takes the plan and executes each step sequentially. It reports back the status of each step.

If execution fails, control returns to the Planner to generate a revised plan based on the new failure context.

When to use it

Use this when the task requires complex coordination or when the steps are relatively independent and can be defined upfront. It reduces token usage during the execution phase because the model doesn't need to re-derive the entire strategy at every step.

Best for:

Complex workflows with known dependencies (e.g., "Onboard a new employee across Jira, Slack, and AWS").

Tasks where latency in the initial planning phase is acceptable for faster execution later.

Drawbacks:

If the initial plan is flawed due to hallucination, the entire execution will fail.

It is less adaptive to dynamic environments than the Iterative Tool-User.

Pattern 3: The Multi-Agent Collaboration (Swarm)

As the breadth of required domain knowledge increases, a single general-purpose LLM struggles to maintain context and select the right tools effectively. The Multi-Agent pattern solves this through specialization.

How it works

Instead of one agent with 50 tools, you design five distinct agents, each with 10 specialized tools and a specific persona (e.g., "Database Administrator," "Frontend Developer," "QA Tester").

A "supervisor" or "orchestrator" agent sits at the top layer. It receives the user request and routes tasks to the appropriate specialist agents. The specialists perform their tasks and report back to the supervisor.

When to use it

This is necessary when the problem domain is too vast for a single prompt context window, or when you need to mix different models (e.g., using GPT-4 for complex reasoning and a faster, cheaper model for simple lookups).

Best for:

Highly complex enterprise workflows crossing multiple domains.

Situations requiring distinct personas to "debate" or review each other's work to ensure accuracy.

Drawbacks:

High implementation complexity. Inter-agent communication adds significant overhead and potential failure points.

Costs increase rapidly as multiple agents confer on a single task.

A framework for choosing

Selecting the right pattern depends on balancing task complexity against required reliability and latency.

| Feature | Iterative Tool-User | Plan-and-Execute | Multi-Agent |

| Task Complexity | Low to Medium | Medium to High | Very High |

| Environment | Dynamic / Unknown | Static / Known | Varied |

| Latency | High (per step) | High initial, Lower execution | Very High (overall) |

| Implementation | Simple | Moderate | Complex |

| Primary Risk | getting stuck in loops | Bad initial plan | Coordination failure |

Start simply. Initially, use the iterative tool-user pattern. Only switch to the plan-and-execute pattern if the agent loses sight of long-term goals. Only use multi-agent collaboration if you encounter limitations in context window size or tool selection precision due to oversaturation.

— Manuela Schrittwieser, Full-Stack AI Dev 🧑💻 & Tech Writer